NFV Testing

Marie-Paule Odini, HPE

This article provides an overview of NFV Testing methodology and tools, covering work in standardization, in particular in ETSI NFV, and in Open Source with OPNFV.

The new challenges of NFV Testing

As networks evolve towards NFV, the traditional model of monolithic network functions and single-vendor solutions is giving way to a set of virtualized functions from multiple vendors, deployed on a virtualized infrastructure with increasingly dynamic and automated lifecycle management. Traditional testing methodology and tools therefore also need to evolve to cover the broader scope of configurations and live scenarios. This evolution can leverage the different types of testing and methodologies defined by ITU SG17 X.290 and the ETSI EG 202 series, such as:

- Pre-Validation Testing,

- Interoperability Testing,

- Conformance Testing,

- Performance Testing.

ETSI NFV has been working on a few specifications specific to NFV Testing, as has the IETF, and OPNFV has defined a number of tools to perform NFV Testing as part of the OPNFV open source project.

From testing monolithic products to testing NFV solutions

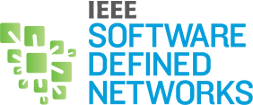

ETSI NFV has defined the terminology and architectural framework of the NFV environment, with 3 key entities: the virtualized infrastructure (NFVI), which replaces the traditional dedicated hardware; the Virtual Network Functions (VNF); and Management and Orchestration (MANO), itself composed of 3 components: a component that manages the infrastructure (VIM), a component that manages the virtualized functions (VNFM), and an orchestrator (NFVO), as shown in Fig 1.

Fig 1. ETSI NFV Architectural Framework – source ETSI NFV GS NFV 002

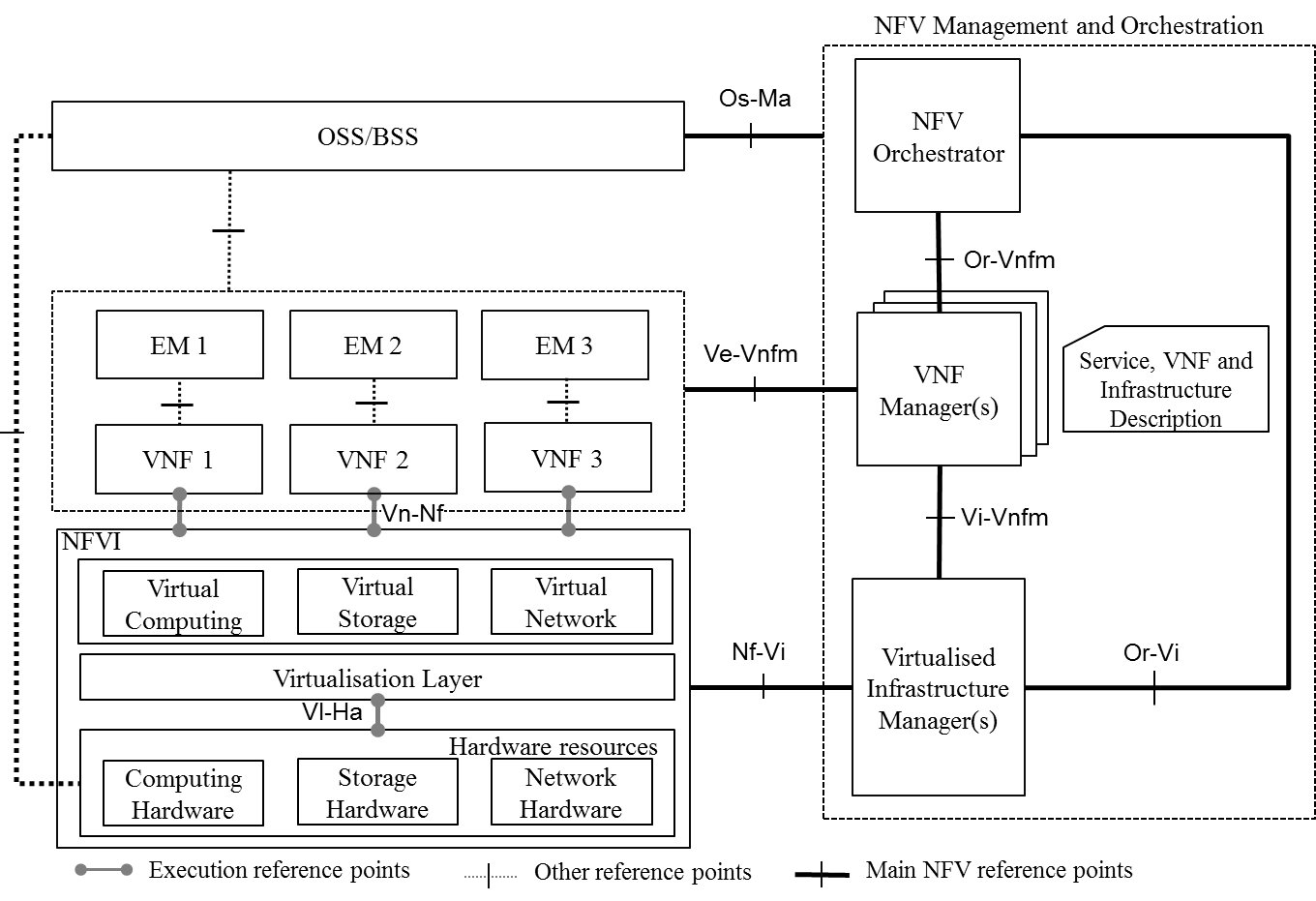

Testing is now evolving from testing monolithic products, which include dedicated hardware and vendor software, to testing a solution in which the hardware has moved to a virtualized infrastructure, often provided by the Service Provider as a managed telco cloud (the ETSI NFV NFVI+VIM), together with a set of virtualized functions (called VNFs by ETSI NFV) and a management function that manages the lifecycle of the VNFs, including dynamic scaling for instance, as depicted in Fig 2. All these components need to be tested separately. Interoperability between these components also needs to be tested, as does the conformance of their interfaces to a given standard or implementation, for instance ETSI NFV, or OpenStack for the VIM. Performance testing also needs to be performed, both in the design phase to establish infrastructure and resource requirements, and after deployment to validate that the allocated resources meet the requirements and that the VNF delivers the expected performance.

Fig 2. Evolution from monolithic product testing to NFV solution testing
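The shift in test scope described above can be made concrete with a small sketch: given the set of components present in a deployment, list one standalone test per component, one interoperability test per reference point whose two ends are both deployed, and a performance test once a VNF runs on the NFVI. The reference-point names (Or-Vi, Or-Vnfm, Vi-Vnfm, Ve-Vnfm, Nf-Vi, Vn-Nf) are an assumption taken, in simplified form, from the ETSI NFV architectural framework of Fig 1, and `derive_test_scope` is a hypothetical helper, not part of any ETSI or OPNFV tool.

```python
# Hypothetical sketch: derive the test activities implied by the components
# present in an NFV deployment. Reference-point names are assumed from the
# ETSI NFV architectural framework (GS NFV 002), simplified.
REFERENCE_POINTS = {
    ("NFVO", "VIM"): "Or-Vi",
    ("NFVO", "VNFM"): "Or-Vnfm",
    ("VNFM", "VIM"): "Vi-Vnfm",
    ("VNFM", "VNF"): "Ve-Vnfm",
    ("NFVI", "VIM"): "Nf-Vi",
    ("VNF", "NFVI"): "Vn-Nf",
}


def derive_test_scope(components):
    """Return (kind, target) tuples for a set of component names."""
    scope = []
    # 1. Each component is tested on its own.
    for component in sorted(components):
        scope.append(("unit", component))
    # 2. Each reference point whose two ends are deployed needs an
    #    interoperability (and interface conformance) test.
    for (end_a, end_b), ref_point in sorted(REFERENCE_POINTS.items(), key=lambda item: item[1]):
        if end_a in components and end_b in components:
            scope.append(("interop", ref_point))
    # 3. Once a VNF runs on the NFVI, its performance must be validated.
    if {"VNF", "NFVI"} <= set(components):
        scope.append(("performance", "VNF on NFVI"))
    return scope


if __name__ == "__main__":
    for item in derive_test_scope({"NFVI", "VIM", "VNFM", "VNF"}):
        print(item)
```

For a deployment without an NFVO, for example, the Or-Vi and Or-Vnfm tests simply drop out of the plan.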

Pre-validation testing

ETSI NFV has published a specification on pre-validation testing that provides guidelines to perform testing of an NFV environment, including testing of the NFVI, NFVI+VIM, MANO and VNF, see ETSI NFV TST001 for more details.

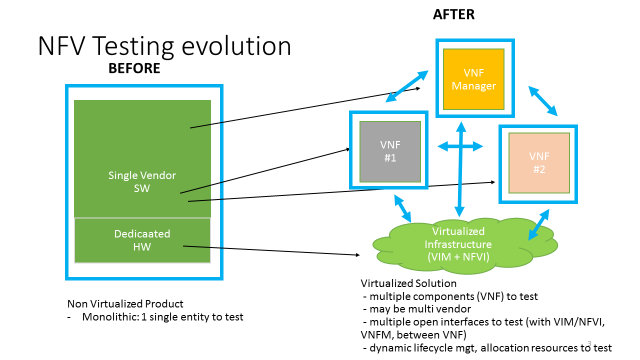

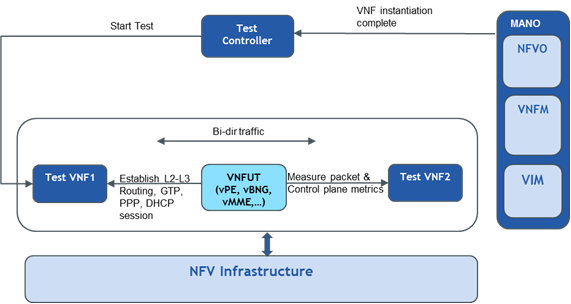

Typically, for VNF testing, an NFV Testing environment needs to be defined with a set of reference components that support the VNF: NFVI+MANO, a Test Controller that controls the test execution and collects results, and test PNFs/VNFs that inject traffic into the VNF Under Test (VNFUT). Next, the VNFUT needs to be characterized as either a data plane function or a control plane function, and the VNF Package needs to be collected and validated. Testing of VNF Lifecycle Management can then proceed as described in clause 7 of ETSI NFV TST001, covering VNF onboarding, instantiation, scaling and termination. Test scripts and metrics are defined, and tests are performed with the Test Controller and the test PNFs/VNFs interacting with the NFV environment and the VNFUT.

Fig 3. VNF Under Test (VNFUT) in an NFV testing environment
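The lifecycle steps above can be sketched as a Test Controller loop that runs onboarding, instantiation, scaling and termination in order, records a verdict and duration for each step, and stops at the first failure because later steps depend on earlier ones. `FakeMano` is a hypothetical in-memory stand-in for the reference NFVI+MANO so that the sketch runs on its own; a real controller would drive the actual VNFM/NFVO interfaces instead, and all method names here are assumptions.

```python
import time
from dataclasses import dataclass


@dataclass
class StepResult:
    step: str
    passed: bool
    duration_s: float


class FakeMano:
    """Hypothetical in-memory stand-in for the reference NFVI+MANO."""

    def __init__(self):
        self.state = {}

    def onboard(self, package_id):
        self.state[package_id] = "ONBOARDED"
        return True

    def instantiate(self, package_id):
        if self.state.get(package_id) != "ONBOARDED":
            return False
        self.state[package_id] = "INSTANTIATED"
        return True

    def scale_out(self, package_id):
        # Scaling only makes sense on an instantiated VNF.
        return self.state.get(package_id) == "INSTANTIATED"

    def terminate(self, package_id):
        if self.state.get(package_id) != "INSTANTIATED":
            return False
        self.state[package_id] = "TERMINATED"
        return True


def run_lifecycle_test(mano, package_id):
    """Run the VNF lifecycle steps in order, as a Test Controller would."""
    steps = [
        ("onboarding", mano.onboard),
        ("instantiation", mano.instantiate),
        ("scaling", mano.scale_out),
        ("termination", mano.terminate),
    ]
    results = []
    for name, action in steps:
        start = time.monotonic()
        passed = bool(action(package_id))
        results.append(StepResult(name, passed, time.monotonic() - start))
        if not passed:
            break  # later steps depend on this one, so stop here
    return results


if __name__ == "__main__":
    for r in run_lifecycle_test(FakeMano(), "vnf-pkg-1"):
        print(f"{r.step:<14} {'PASS' if r.passed else 'FAIL'} {r.duration_s * 1000:.3f} ms")
```

Keeping the MANO access behind a small object makes it easy to swap the stub for a client of the real reference MANO without touching the test sequence.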

For instance, for a Data Plane VNF covering L2-L3, once VNF onboarding and VNF instantiation have been performed, validation of the VNF instantiation is triggered by a test in which the Test Controller asks a test PNF1 or VNF1 to inject traffic into the VNFUT. A second test PNF2 or VNF2 then measures the packets and the pre-defined metrics.

Fig 4. Validation of the Instantiation of a L2-L3 VNFUT - source ETSI NFV TST001
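The measurement side of this validation can be sketched as follows: the counters from the injecting test PNF1/VNF1 and the measuring test PNF2/VNF2 are turned into frame loss ratio, throughput and average latency, then compared with pre-defined thresholds. The `TrafficReport` fields, the chosen metrics and the threshold values are hypothetical; the actual metrics are whatever the test plan pre-defines, and throughput here counts frame bits only, ignoring Ethernet preamble and inter-frame gap.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List


@dataclass
class TrafficReport:
    tx_frames: int          # frames injected by test PNF1/VNF1
    rx_frames: int          # frames counted by test PNF2/VNF2
    frame_size_bytes: int   # fixed frame size used for the run
    duration_s: float       # length of the measurement window
    latencies_us: List[float] = field(default_factory=list)  # one-way samples


def evaluate(report, max_loss_ratio, max_avg_latency_us):
    """Turn raw counters into metrics and a pass/fail result."""
    if report.tx_frames <= 0 or not report.latencies_us:
        raise ValueError("need injected frames and at least one latency sample")
    loss_ratio = (report.tx_frames - report.rx_frames) / report.tx_frames
    # Frame bits only: Ethernet preamble and inter-frame gap are ignored.
    throughput_mbps = report.rx_frames * report.frame_size_bytes * 8 / report.duration_s / 1e6
    avg_latency_us = mean(report.latencies_us)
    return {
        "loss_ratio": loss_ratio,
        "throughput_mbps": throughput_mbps,
        "avg_latency_us": avg_latency_us,
        "passed": loss_ratio <= max_loss_ratio and avg_latency_us <= max_avg_latency_us,
    }


if __name__ == "__main__":
    run = TrafficReport(1_000_000, 999_000, 64, 10.0, [10.0, 20.0, 30.0])
    print(evaluate(run, max_loss_ratio=0.01, max_avg_latency_us=50.0))
```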

Work on the VNF Package, including some guidelines on VNF Package testing, is currently being completed in ETSI NFV IFA011.
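A first, basic VNF Package check can be sketched as below. It assumes a TOSCA CSAR-style zip layout with a `TOSCA-Metadata/TOSCA.meta` file whose `Entry-Definitions` key points to the main descriptor; this layout and the list of required keys are assumptions (they follow the CSAR option used by ETSI GS NFV-SOL 004, which this article does not cover), and the checks are far from a full IFA011 validation.

```python
import zipfile

META_PATH = "TOSCA-Metadata/TOSCA.meta"
# Assumed block-0 keys of a TOSCA.meta file in a CSAR-style package.
REQUIRED_META_KEYS = ("TOSCA-Meta-File-Version", "CSAR-Version", "Created-By", "Entry-Definitions")


def validate_vnf_package(package):
    """Return a list of problems; an empty list means the basic checks passed.

    `package` is a path or a binary file object holding the zip archive.
    """
    problems = []
    with zipfile.ZipFile(package) as archive:
        names = set(archive.namelist())
        if META_PATH not in names:
            return [f"missing {META_PATH}"]
        # Parse "Key: value" lines of the metadata file.
        meta = {}
        for line in archive.read(META_PATH).decode("utf-8").splitlines():
            key, sep, value = line.partition(":")
            if sep:
                meta[key.strip()] = value.strip()
        for key in REQUIRED_META_KEYS:
            if key not in meta:
                problems.append(f"TOSCA.meta lacks {key}")
        # The descriptor named by Entry-Definitions must be inside the package.
        entry = meta.get("Entry-Definitions")
        if entry and entry not in names:
            problems.append(f"entry definitions {entry} not found in package")
    return problems
```

Returning a list of problems, rather than stopping at the first one, lets the Test Controller report every packaging defect in a single run.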

Interoperability Testing

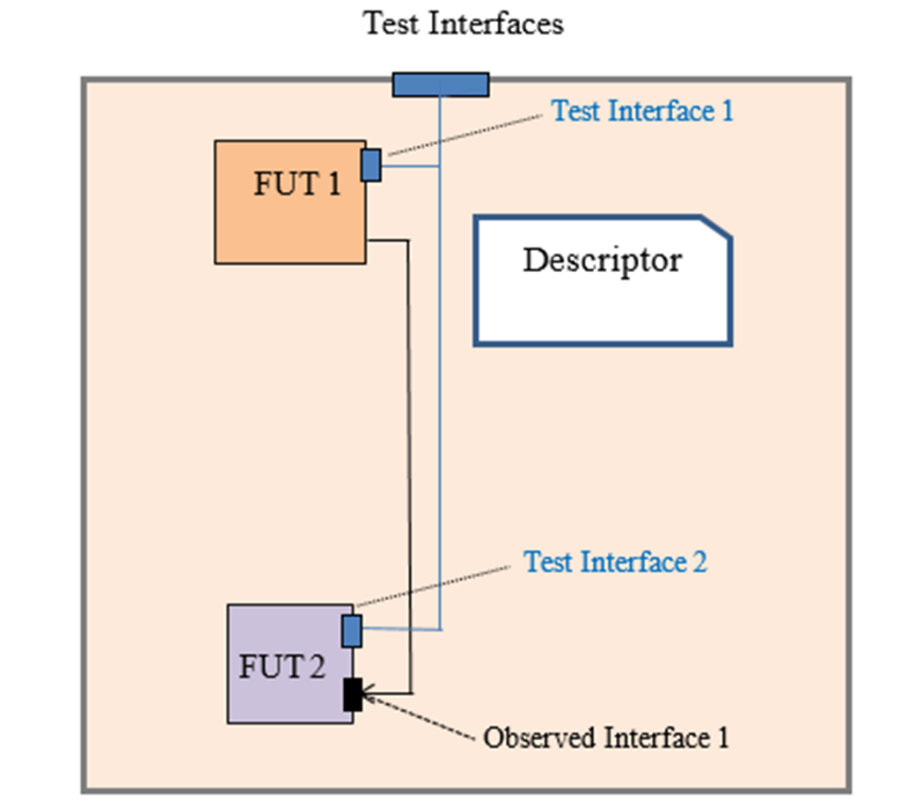

As VNFs interact with many other elements in the NFV environment (NFVI, VNF Manager, other VNFs), performing interoperability testing prior to deployment is also important, especially since many different combinations are possible and the environment may change dynamically during the lifetime of a given VNF. ETSI NFV has defined a methodology for NFV interoperability testing in ETSI NFV GS TST002, which is about to be published. Interoperability testing tests at the functional level, not at the protocol level. Entities in the test environment access the different Functions Under Test (FUT) via the Test Interfaces offered by the System Under Test (SUT). An SUT is typically composed of one or more FUTs; in the case of interoperability testing, it contains at least 2 FUTs that interoperate with each other. When the FUT is a VNF, the term VNFUT is used. In the example in Fig 5, interoperability tests are performed between FUT1 and FUT2. “Observed interface 1”, which connects FUT1 and FUT2, is tested via a set of test scripts that exercise the SUT Test Interfaces, for instance by sending traffic to the FUT1 Test Interface and collecting results on the FUT2 Test Interface.

Fig 5. NFV Interop test example
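The observation side of such a test can be sketched as a check that the messages captured on the observed interface follow the expected call flow in order, combined with the outcome read at the FUT2 Test Interface to produce a verdict. The message names, the `interop_verdict` helper and the use of an INCONCLUSIVE verdict when the stimulus could not be applied are illustrative assumptions, not taken from TST002.

```python
from enum import Enum


class Verdict(Enum):
    PASS = "PASS"
    FAIL = "FAIL"
    INCONCLUSIVE = "INCONCLUSIVE"


def follows_call_flow(observed, expected):
    """True if the expected messages appear on the observed interface in
    order; unrelated messages may be interleaved between them."""
    pos = 0
    for msg in observed:
        if pos < len(expected) and msg == expected[pos]:
            pos += 1
    return pos == len(expected)


def interop_verdict(stimulus_applied, observed_trace, expected_flow, fut2_result_ok):
    """Combine what was seen on the observed interface with the outcome
    read at the FUT2 Test Interface."""
    if not stimulus_applied:
        # The stimulus never reached FUT1, so nothing can be concluded.
        return Verdict.INCONCLUSIVE
    if follows_call_flow(observed_trace, expected_flow) and fut2_result_ok:
        return Verdict.PASS
    return Verdict.FAIL


if __name__ == "__main__":
    # Hypothetical message names captured on "observed interface 1".
    trace = ["AllocateComputeRequest", "Heartbeat", "AllocateComputeResponse"]
    flow = ["AllocateComputeRequest", "AllocateComputeResponse"]
    print(interop_verdict(True, trace, flow, fut2_result_ok=True))
```

Allowing interleaved messages keeps the check at the functional level described above, rather than demanding an exact protocol-level trace.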

ETSI NFV TST002 has defined a set of guidelines, including the list of NFV Interoperability Features Statements (IFS) to test according to the ETSI NFV standards. These cover VNF Package Management, VNF SW Image Management, NS Descriptor Management, VNF Lifecycle Management, VNF Fault, Configuration and Performance Management, NS Lifecycle Management, and NS Fault and Performance Management. A typical interoperability test description provides an identifier, test purpose, configuration, pre-conditions, test sequence, IoP verdict, etc.; then, for each feature, the SUT configuration, the observed interface and the test interfaces are detailed. A few examples are also provided, such as VNF onboarding with its call flow and test description.

ETSI NFV TST007 is now defining the Test Suite for these interoperability tests. Current work has started on VIM-VNFM interoperability, based on the following standard IFS defined in IFA006 for the Vi-Vnfm reference point: Software Image Management, Lifecycle Management, Network Resource Management, and Fault and Performance Management. For each, the IFS, a Test Case Overview and the Test Description are provided. The Test Suite will be provided in an annex and will be aligned with the ETSI NFV Plugtest test plan. These test suites will be actual tests that the industry can use to perform interoperability tests between 2 NFV entities as defined by ETSI NFV.
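The structure of an interoperability test description listed above maps naturally onto a small record type. The sketch below is a hypothetical representation: the step kinds, the identifier format and the rule that the IoP verdict is PASS only when every IOP check passes are assumptions for illustration, not the normative TST002 or TST007 format.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Step:
    kind: str            # assumed kinds: "stimulus", "check" or "iop_check"
    description: str
    passed: bool = True  # outcome recorded while running the test


@dataclass
class InteropTestDescription:
    identifier: str
    test_purpose: str
    configuration: str
    preconditions: List[str]
    sequence: List[Step] = field(default_factory=list)

    def iop_verdict(self):
        """PASS only if every IOP check passed; INCONCLUSIVE if there was none."""
        iop_checks = [s for s in self.sequence if s.kind == "iop_check"]
        if not iop_checks:
            return "INCONCLUSIVE"
        return "PASS" if all(s.passed for s in iop_checks) else "FAIL"


if __name__ == "__main__":
    td = InteropTestDescription(
        identifier="TD_NFV_EXAMPLE_001",  # hypothetical identifier
        test_purpose="Verify that a VNF package can be onboarded",
        configuration="SUT: NFVO + VNFM + VIM/NFVI",
        preconditions=["VNF package available to the NFVO"],
        sequence=[
            Step("stimulus", "Trigger onboarding of the VNF package"),
            Step("iop_check", "Package is listed as onboarded by the NFVO"),
            Step("iop_check", "VNF software image is available in the VIM"),
        ],
    )
    print(td.identifier, td.iop_verdict())
```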

ETSI is organizing an ETSI NFV Plugtest in early 2017. Vendors and participants will bring their NFV components (VNF, NFVI-VIM or VNFM-NFVO) to perform live interoperability tests as defined by ETSI, based on the ETSI NFV TST007 specification. More details are available at www.etsi.org/nfvplugtest

Further work on NFV Testing

Besides the work detailed above, ETSI NFV has also published specifications on performance testing: the ETSI NFV Performance and Portability Guidelines, ETSI GS NFV-PER 001, and the Service Quality Metrics, ETSI GS NFV-INF 010. Work is also under way on path testing in ETSI NFV TST004.

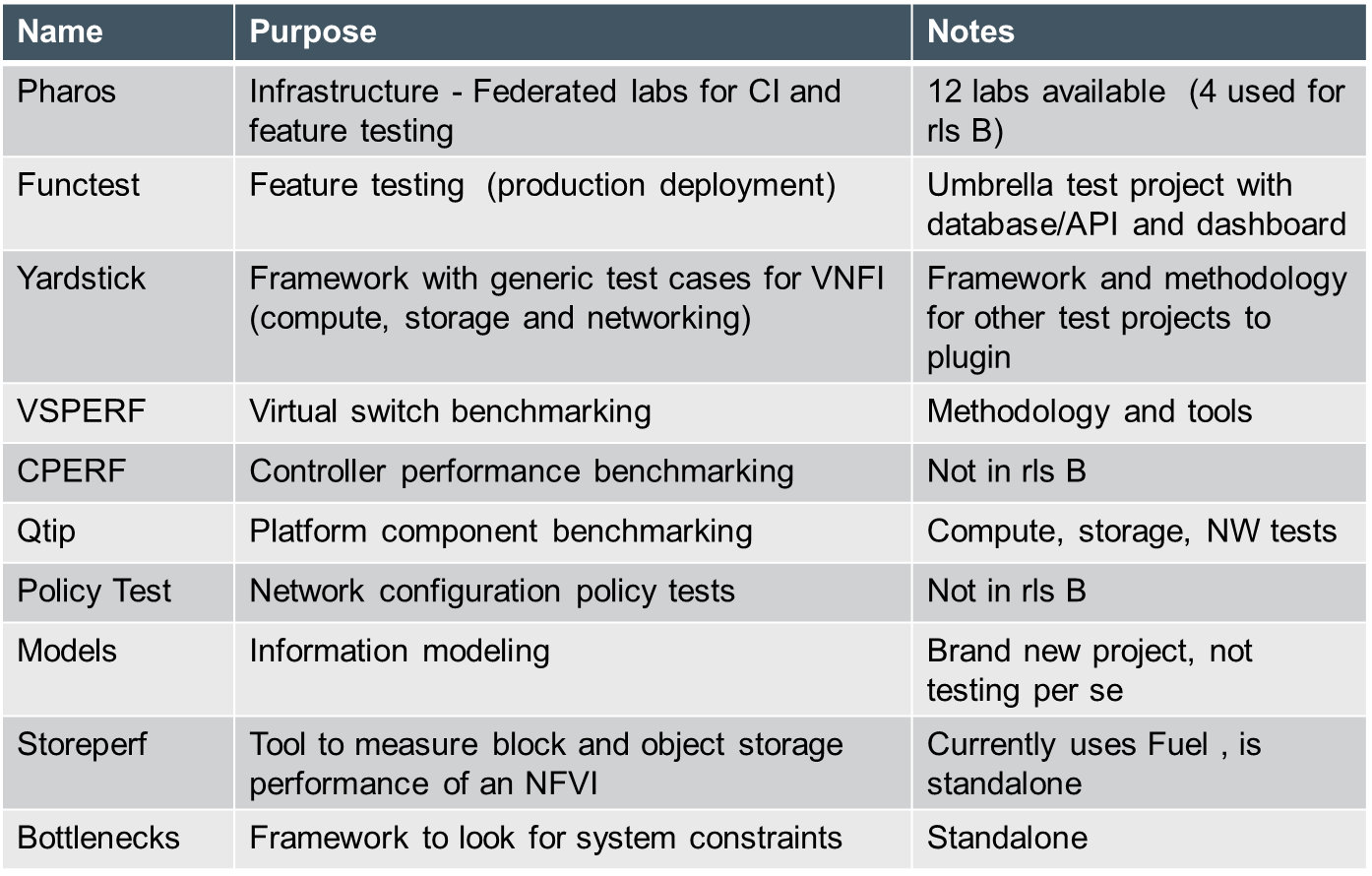

The OPNFV open source project has also produced a number of tools for functional testing, vSwitch performance testing and other test solutions, which are part of the OPNFV CI/CD environment and are used in the OPNFV Pharos labs. This code is freely available through the OPNFV open source project.

A few other standards bodies have also produced work that can be leveraged for NFV Testing, such as:

- IETF/IRTF NFV Benchmarking draft (draft-huang-bmwg-virtual-network-performance-01: “Benchmarking methodology for Virtualization Network Performance”),

- IETF devops draft (https://tools.ietf.org/html/draft-sonata-nfvrg-devops-gatekeeper-00 : “The Role of a Mediation Element in NFV DevOps”) or

- IETF SDN controller benchmarking drafts (https://tools.ietf.org/html/draft-ietf-bmwg-sdn-controller-benchmark-meth-02 : “Benchmarking Methodology for SDN Controller Performance”).

More on NFV training, including an ETSI NFV tutorial, is available at: http://www.layer123.com/nfv-webcast-mle123/

Marie-Paule Odini holds a master's degree in electrical engineering from Utah State University. Her telecom experience includes voice and data. After managing the HP worldwide VoIP program, the HP wireless LAN program and the HP Service Delivery program, she is now HP CMS CTO for EMEA and a Distinguished Technologist, NFV, SDN at Hewlett-Packard. Since joining HP in 1987, Odini has held positions in technical consulting, sales development and marketing within different HP organizations in France and the U.S. All of her roles have focused on networking or the service provider business, either in solutions for the network infrastructure or for operations.


Editor:

Laurent Ciavaglia is currently senior research manager at Nokia Bell Labs where he coordinates a team specialized in autonomic and distributed systems management, inventing future network management solutions based on artificial intelligence.


In recent years, Laurent led the European research project UNIVERSELF (www.univerself-project.eu), developing a unified management framework for autonomic network functions. Laurent has also worked on the design, specification and evaluation of carrier-grade networks, including in several European research projects dealing with network control and management.

As part of his activities in standardization, Laurent participates in several working groups of the IETF OPS area, is co-chair of the Network Management Research Group (NMRG) of the IRTF, and is a member of the Internet Research Steering Group (IRSG). Previously, Laurent was also vice-chair of the ETSI Industry Specification Group on Autonomics for Future Internet (AFI), working on the definition of standards for self-managing networks.

Laurent has co-authored more than 80 publications and holds 35 patents in the field of communication systems. Laurent also serves on the technical committees of several IEEE, ACM and IFIP conferences and workshops, and as a reviewer for refereed international journals and magazines.


IEEE Softwarization Editorial Board

Laurent Ciavaglia, Editor-in-Chief

Mohamed Faten Zhani, Managing Editor

TBD, Deputy Managing Editor

Syed Hassan Ahmed

Dr. J. Amudhavel

Francesco Benedetto

Korhan Cengiz

Noel Crespi

Neil Davies

Eliezer Dekel

Eileen Healy

Chris Hrivnak

Atta ur Rehman Khan

Marie-Paule Odini

Shashikant Patil

Kostas Pentikousis

Luca Prete

Muhammad Maaz Rehan

Mubashir Rehmani

Stefano Salsano

Elio Salvadori

Nadir Shah

Alexandros Stavdas

Jose Verger